Enclave creates encrypted connections between networked systems. Our socket implementation aims to move as much data as possible for the lowest CPU cost. In practical terms, we want to avoid consuming CPU cycles but get as close as we can to line speed in terms of throughput.

The preferred transport Enclave uses to build encrypted tunnels between systems is UDP; falling back to TCP if UDP communication is not possible.

Enclave operates at Layer 2 (carrying Ethernet frames across tunnels). Unreliable UDP datagrams are the preferred transport for tunnelled traffic because it’s highly likely that tunnels will themselves carry encapsulated TCP traffic. Carrying encapsulated TCP traffic inside a TCP connection can lead to a mismatch of timers, such that upper and lower TCP tunnels mismatch creating a situation where the upper layer can queue up more retransmissions than the lower layer process adversely impacting throughput of the link. For a more in-depth explanation of the TCP over TCP problem, see Why TCP Over TCP Is A Bad Idea by Olaf Titz.

So, what are our requirements for our UDP sockets in the Enclave world? Basically, there are three:

- Achieve as close to line speed as possible when sending and receiving.

- Try to use processor time efficiently and not waste cycles.

- Sustain large numbers of concurrent UDP sockets.

The guide below outlines how our C# UDP socket implementation comfortably saturates a 1Gbps connection and keeps CPU usage to a minimum.

This is a follow-up post to my original post on doing high-performance UDP in .NET 5. In .NET 5, we had to add a pretty complicated custom implementation of SocketAsyncEventArgs. Since then, in .NET 6 the .NET runtime team have added new functionality to the Socket class that renders that no longer necessary, and this post reflects the changes.

If you’re still on .NET 5 (you should probably upgrade since .NET 6 is LTS), head over to the original.

If you want to jump ahead, the complete working example code is available in our github: https://github.com/enclave-networks/research.udp-perf. You can also just jump to the results below if you wish.

The Fundamentals

Ok, so with our requirements in mind, what are the basic guiding principles we are going to apply to get the best possible performance out of our sockets?

Use Asynchronous IO

It’s relatively well known now (especially with all the async/await behaviour in .NET) that to achieve a significant amount of concurrent socket IO, you need

to be using Asynchronous IO.

The basic concept is that rather than initiate an IO operation and block the thread until it completes, with async IO we initiate an IO operation and get told (possibly on a different thread) when that operation completes. This reduces thread usage, and stops us exhausting the .NET thread pool when we have a lot of sockets.

On Windows, Asynchronous IO is presented via IO Completion Ports (IOCP), and on Linux through the epoll interface.

Luckily, all these platform-specific mechanics are entirely abstracted away for us by the .NET Runtime. However, using the asynchronous functionality in .NET Sockets in the ‘right’ way is really important when you’re trying to squeeze out all the performance you can.

As well as large amounts of concurrent IO, if done correctly async IO can also achieve very high throughput to/from a single endpoint, as we’ll see very shortly.

Avoid Copying Data

We want to avoid copying data to and from buffers whenever possible. This means no taking copies of data before processing received data, and no creating new byte arrays to get one the right size!

It’s much easier to avoid copying byte arrays these days thanks to the Memory<byte> and Span<byte> types that let you represent ‘slices’ of memory without

re-allocating anything:

Old unpleasantness with a new byte[] allocation:

1

2

3

4

5

var recvBuffer = new byte[1024];

int bytesRead = ReceiveSomeData(recvBuffer);

var actualReceived = new byte[bytesRead];

Array.Copy(recvBuffer, actualRecvd, bytesRead);

New goodness:

1

2

3

4

5

Memory<byte> recvBuffer = new byte[1024];

int bytesRead = ReceiveSomeData(recvBuffer);

// No allocations to get a subsection of the buffer.

var actualReceived = recvBuffer.Slice(0, bytesRead);

Avoid Allocating Memory

When you’re trying to squeeze every last bit of CPU time, it’s worth trying to avoid allocating objects and memory from the heap where possible. This avoids both:

- The time it takes to actually allocate objects; the GC is fast, but allocating a new object from Gen0 still takes some time.

- Reduce GC pressure. The more you allocate, the more the GC has to clean up; again, this process is fast, but still consumes CPU time.

.NET 6 has a lot of options to help with this, but one of the features that helps us the most when we’re doing our async IO is the availability of ValueTask and IValueTaskSource, which avoids additional allocations where possible (as opposed to Task).

Using the right Socket methods

The .NET Socket has a number of overloads of SendTo, SendToAsync, ReceiveFrom and ReceiveFromAsync. Which one you choose to use has a huge impact on your performance.

If you take what we’ve mentioned so far around allocations and the use of Memory<byte> and ValueTask, that helps us select the methods we want to use.

First up is the ReceiveFromAsync method. The one we want (from a performance perspective) takes a Memory<byte> argument and returns a ValueTask<SocketReceiveFromResult>.

1

2

3

4

5

public ValueTask<SocketReceiveFromResult> ReceiveFromAsync(

Memory<byte> buffer,

SocketFlags socketFlags,

EndPoint remoteEndPoint,

CancellationToken cancellationToken = default);

Set remoteEndPoint to an instance of

new IPEndPoint(IPAddress.Any, 0)to receive packets from any sender.

The Memory<byte> buffer you provide should be big-enough to fit an entire UDP packet, e.g. 65527 bytes, otherwise the received packet may be truncated and you will get a WSAEMSGSIZE error from the operation.

The SocketReceiveFromResult is a struct that contains the EndPoint that sent the packet, plus the number of bytes that were read into the provided buffer. You can use this

to get a slice of the buffer without copying, that contains just the received packet:

1

2

3

4

5

6

Memory<byte> buffer = new byte[65527];

SocketReceiveFromResult recvResult = await udpSocket.ReceiveFromAsync(buffer, SocketFlags.None, _blankEndpoint);

// This doesn't copy.

var recvPacket = buffer.Slice(0, recvResult.ReceivedBytes);

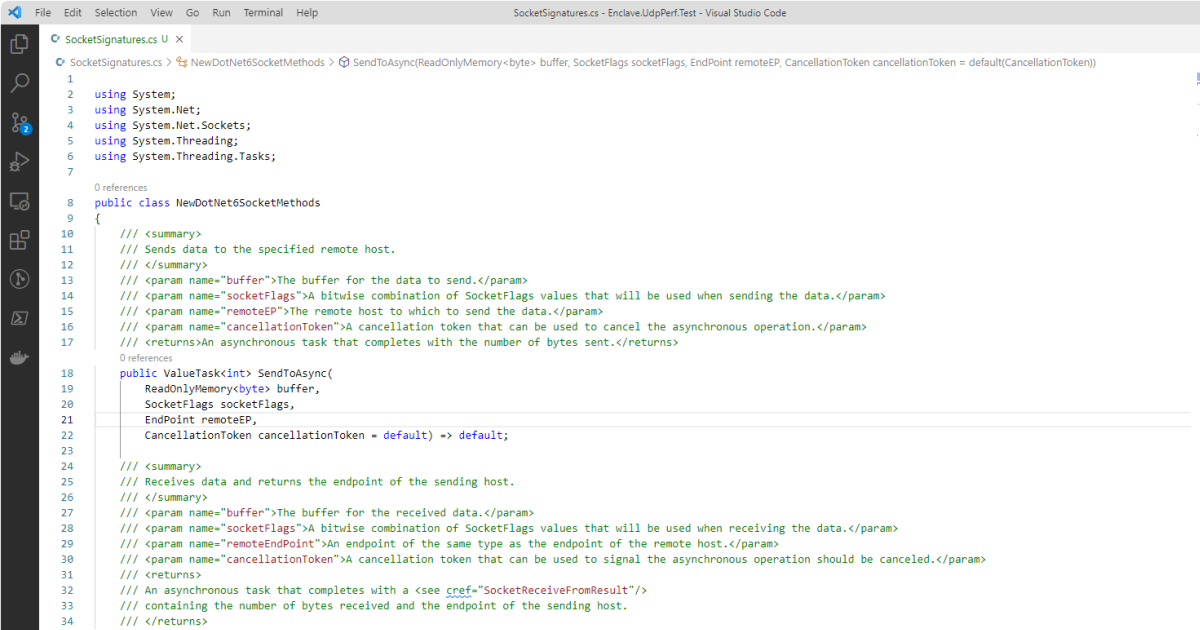

For the send operation, we want SendToAsync. It accepts a read-only buffer, and returns the number of bytes sent when the ValueTask completes.

1

2

3

4

5

public ValueTask<int> SendToAsync(

ReadOnlyMemory<byte> buffer,

SocketFlags socketFlags,

EndPoint remoteEP,

CancellationToken cancellationToken = default);

AwaitableSocketAsyncEventArgs

The use of ValueTask allows these socket methods to reduce the number of allocations for receiving packets to zero in most cases.

How does it do this? Just using ValueTask isn’t enough to get allocations to zero, right?

The trick is that behind the scenes the Socket class uses a shared instance of an internal AwaitableSocketAsyncEventArgs class which implements the super-interesting IValueTaskSource interface.

There are excellent blog posts on IValueTaskSource by more learned people than myself, but the basic concept is that you create a class that implements three methods:

GetStatus: Checks the status of theValueTask(Pending, Succeeded, Faulted, etc.)GetResult: Gets the result of the operation (e.g. if you are usingValueTask<int>, you’d return anintfrom this method).OnCompleted: This is the chunky one; this method is called when someone usesawaiton yourValueTask, and it’s responsible for invoking the provided continuation parameter when our asynchronous operation completed.

Because the instance of AwaitableSocketAsyncEventArgs the Socket class uses is reset after each IO operation, each call to ReceiveAsync will use the same object.

Note; if you have a significant amount of concurrent IO on the same socket in one direction, for example, a significant number of concurrent writes, you might notice some slowdown caused by allocating instances of

AwaitableSocketAsyncEventArgs.Only a single shared instance of that class is held by the socket for each IO ‘direction’ (one for read, one for writes); if an IO operation is initiated while the other is pending, it will allocate a new instance.

Using the Pinned Object Heap (POH)

Every time .NET needs to pass a block of memory managed by the garbage collector to native code (e.g. the Win32 recvfrom method), it must “pin” that memory, so that while the native code is executing, the GC does not move that memory during compaction.

Pinning a buffer allocated in the normal SOH (Small Object Heap) or LOH (Large Object Heap) repeatedly has overheads, not just with the pinning operation itself, but it can slow down the GC to have a lot of pinned objects in the SOH and LOH, because the GC has to effectively “work around” them.

In .NET 5, the runtime added the concept of a Pinned Object Heap (POH) which is an area designed to hold buffers intended for native IO operations; it was initially needed for improving the performance of sockets in ASP.NET Core HTTP request handling.

You can allocate a pinned array of bytes like this:

1

2

byte[] buffer = GC.AllocateArray<byte>(length: 65527, pinned: true);

Memory<byte> bufferMem = buffer.AsMemory();

As the name might suggest, the POH never compacts or moves the memory allocated there, and so saves CPU time in the GC and during IO operations.

The POH is designed for small numbers of large buffers, as opposed to a huge number of individual buffers. If you need a lot of smaller pinned buffers, it can be wise to allocate a large array from the POH, then sub-divide it into individual buffers using

Memory<byte>.Slice.

In our code used for the tests below, we allocate a single receive buffer from the POH, and use it for every read.

The Pay-Off

Okay, so it’s all well and good going on about allocation-this, and ValueTask-that, but what do we get for our efforts?

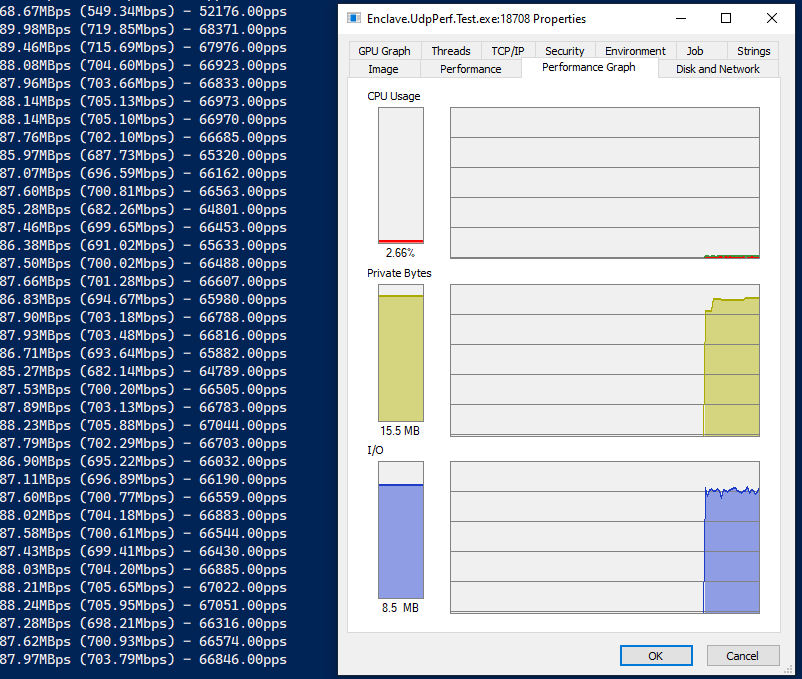

Here’s some very basic performance tests where I send data across a gigabit link, and monitor the CPU usage as I do so.

My machine for testing has a 12-core Intel i7-9750H@2.60GHz powering it (for reference).

You can run these tests yourselves using the Enclave.UdpPerf.Test project in the linked GitHub repo. You may need to disable/reconfigure your firewall on the receive side.

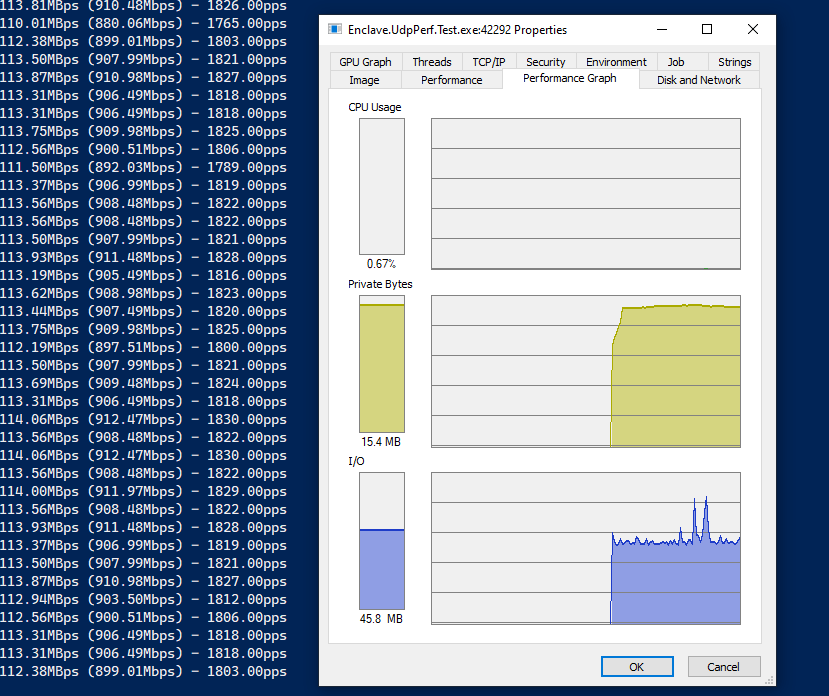

Big Packets

First up, send performance, with 65k packets:

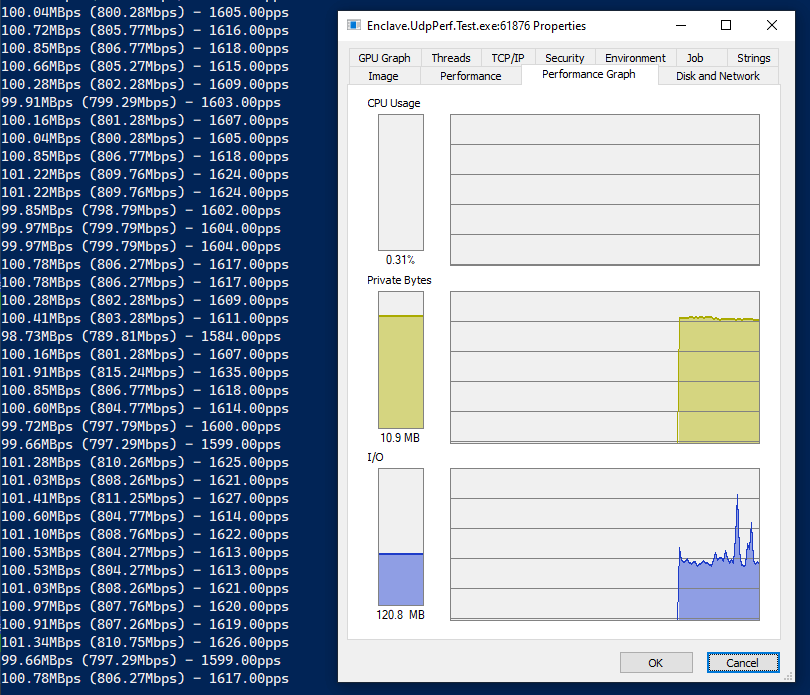

… and receive performance, on the same machine, 65k packets:

So, you can see that we’re averaging around 900Mbps on the send, and 800Mbps on the receive. Processing around 1800 packets-per-second. The drop in receive rates is most likely UDP packet loss over the link, or buffers on the receive side getting full.

Importantly, the CPU is barely ticking over, and our memory stays flat at about 15MB.

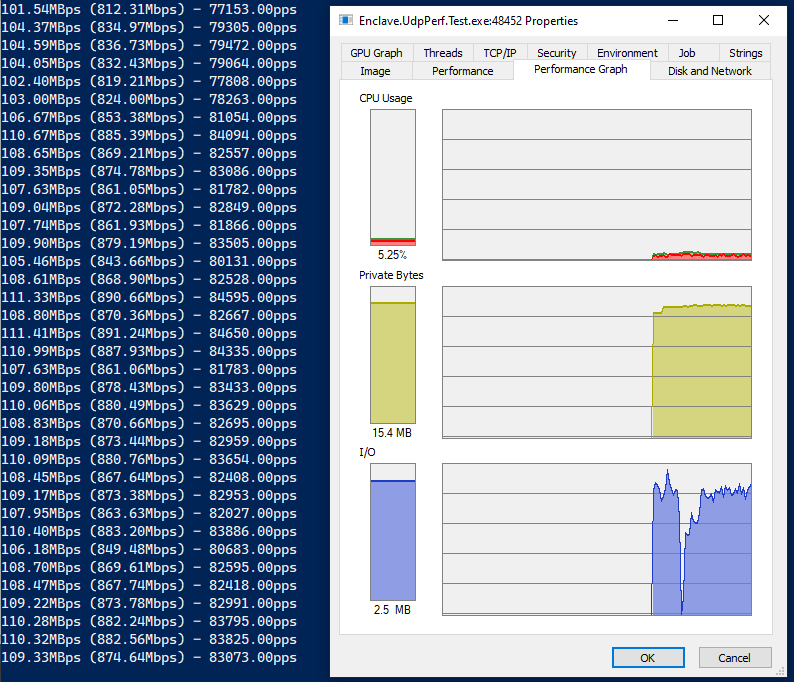

Small Packets

Let’s bring the packet size down to a realistic small packet size, to fit in a single Ethernet frame, so about 1380 bytes of data in each UDP packet. We should be able to fit a lot more packets over the link at that size, so let’s see with send performance first:

…and receive, on the same machine, 1380 byte packets:

Look at those packet-per-second numbers! We’re up to around 82,000 packets per second, and we’re still only using about 5% of the overall CPU, and the receive side is even lower! Great stuff.

Remaining Allocation Frustration

There’s only one thing that eludes me with this solution, and it is that we are so close to being completely allocation-free on the send and receive paths, but we aren’t quite. There are still objects allocated during the receive path, but who’s the culprit?

Profiling shows us that an IPEndPoint instance is needed for each result of ReceiveFromAsync, to contain the source of the packet we received. Since IPEndPoint

is a class, it has to be new-ed up every time, which is the source of our allocations. At some point if would be nice to see a struct version of IPEndPoint that might help us cut back on the few remaining allocations.

There is an open issue in the runtime repo tracking the desire for allocation-free UDP APIs, but I suspect we may be waiting a little while.

Wrap-Up

Hopefully this gives you some tips for using high-performance UDP sockets (and perhaps IO in general) in your app. As linked at the start, you can grab all this code on our Github: https://github.com/enclave-networks/research.udp-perf.